We have an on-prem Matomo instance that is tracking ~2,500 websites. We are currently running Matomo 4.14.2, but the issue described below has also occurred on several previous versions.

PHP: 8.1.21

MySQL: 8.0.34

Nginx: 1.24.0

The database is large: 210 GB on disk.

Our platform calls the Matomo API to create a new site whenever tracking is requested for one, and that call just times out and never completes. We also have API calls that delete sites from Matomo when we purge them from our own systems, and those appear to time out as well.
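For reference, the site-creation request is roughly of the shape below. This is a minimal sketch, not our exact code: it assumes the call goes through Matomo's standard `SitesManager.addSite` API method, and the host name, token, and helper name `build_add_site_url` are placeholders made up for illustration.

```python
from urllib.parse import urlencode


def build_add_site_url(base_url: str, token_auth: str, site_name: str, site_url: str) -> str:
    """Build the request URL for a SitesManager.addSite API call.

    base_url and token_auth are placeholders; substitute real values.
    """
    params = {
        "module": "API",
        "method": "SitesManager.addSite",
        "siteName": site_name,
        "urls[0]": site_url,  # first (and here only) URL of the new site
        "format": "JSON",
        "token_auth": token_auth,
    }
    return f"{base_url.rstrip('/')}/index.php?{urlencode(params)}"


if __name__ == "__main__":
    url = build_add_site_url(
        "https://matomo.example.com",  # hypothetical host
        "YOUR_TOKEN",                  # hypothetical token
        "Example Site",
        "https://www.example.com",
    )
    print(url)
    # To send it with an explicit client-side timeout (in seconds):
    #   import urllib.request
    #   urllib.request.urlopen(url, timeout=30)
```

Setting an explicit client-side timeout at least makes the failure show up quickly on the calling side instead of hanging, although it does nothing about the slow query behind it.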

I'm thinking the database as a whole may need some additional indexes so that the queries behind these API calls run faster?

We also have the system set to delete raw data older than 30 days, but I'm not sure that is actually running successfully at this point.

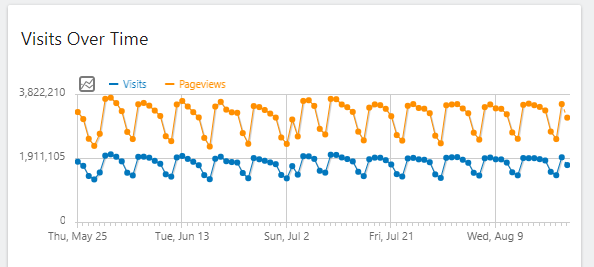

Overall, site traffic is very high. We are tracking just under 4 million page views a day during the week and around 2 million a day on weekends.
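To put those numbers in perspective, here is a rough back-of-the-envelope estimate of how many page views a 30-day raw-data window would hold. It is only a sketch: it treats the "just under 4 million" weekday figure as a flat 4 million, so it is an upper bound, and the function names are made up for illustration.

```python
WEEKDAY_PAGEVIEWS = 4_000_000  # "just under 4 million", rounded up
WEEKEND_PAGEVIEWS = 2_000_000  # "around 2 million"
RETENTION_DAYS = 30            # raw-data retention setting


def weekly_pageviews(weekday: int, weekend: int) -> int:
    """Page views in one week: five weekdays plus two weekend days."""
    return 5 * weekday + 2 * weekend


def retained_pageviews(weekday: int, weekend: int, days: int) -> float:
    """Approximate page views held in a raw-data window of `days` days."""
    daily_average = weekly_pageviews(weekday, weekend) / 7
    return daily_average * days


if __name__ == "__main__":
    weekly = weekly_pageviews(WEEKDAY_PAGEVIEWS, WEEKEND_PAGEVIEWS)
    retained = retained_pageviews(WEEKDAY_PAGEVIEWS, WEEKEND_PAGEVIEWS, RETENTION_DAYS)
    print(f"Per week: {weekly:,}")
    print(f"In a {RETENTION_DAYS}-day window: about {round(retained):,}")
```

That works out to about 24 million page views a week and roughly 103 million in a 30-day window, assuming the purge is keeping up. If it is not, the raw tables would be holding considerably more than that.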

I'm curious whether there are any other on-prem users out there with similarly high traffic who could help with database optimizations or other tuning?

Thanks

Grant